Testing with AI

Via an integration with OpenAI, Reflect's AI features allow you to easily create tests that are resilient to changes in the underlying application.

AI Prompt Steps

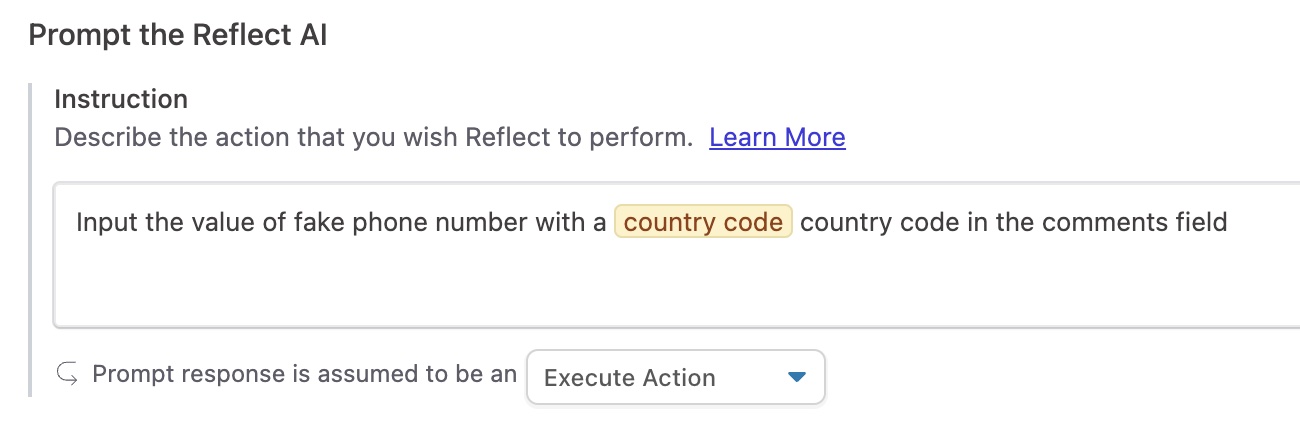

Test steps in Reflect can be created either by recording your actions in the Reflect recorder, or by describing the actions or assertions you want Reflect to perform using our “AI Prompt” feature. With AI Prompts, Reflect translates your English-language instructions into one or more actions or assertions that are executed just like any other test step in Reflect.

If you’re familiar with Behavior-Driven Development (BDD), this approach may sound familiar. One big difference between traditional BDD tools like Cucumber and our AI Prompt feature is that Prompt Steps are truly freeform; you can enter any instruction you’d like, and it need not conform to any pre-defined Gherkin syntax.

When executing a Prompt step, Reflect uses an integration with OpenAI to analyze the state of the page and determine what set of actions should be performed to complete the prompt instruction.

Prompt steps can be simple one-action instructions like “Click on the Login button” or “Input the value test@example.com in the username field”. Prompts can also be assertions like “What is the amount associated with the Opportunity?” or free-form questions like “How many rows are in the table?”. You can also write prompts that represent multiple actions. For example, a prompt like “Fill out all form fields with realistic values” could result in Reflect performing multiple actions, depending on how many form fields are present on the page. Since Reflect consults the AI every time the test runs to determine what actions to perform, a prompt like this will be resilient to changes over time, including the addition and removal of form fields.
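The resilience described above comes from resolving the prompt against the page as it exists at run time, rather than against a fixed list of recorded fields. The following is a minimal sketch of that idea, not Reflect's implementation: the `Action` class, the `SAMPLE_VALUES` table, and `resolve_fill_form_prompt` are hypothetical names, and a lookup table stands in for the values the AI would choose.

```python
from dataclasses import dataclass


@dataclass
class Action:
    kind: str    # type of action, e.g. "input"
    target: str  # name of the form field the action applies to
    value: str   # value to enter into the field


# Hypothetical sample values keyed by field type (a stand-in for AI-chosen values).
SAMPLE_VALUES = {"email": "test@example.com", "text": "Jane Doe", "tel": "555-0100"}


def resolve_fill_form_prompt(fields: dict[str, str]) -> list[Action]:
    """Return one input action per field currently on the page.

    `fields` maps field name -> field type, as observed at run time.
    """
    return [
        Action("input", name, SAMPLE_VALUES.get(field_type, "example"))
        for name, field_type in fields.items()
    ]


# Run 1: the page has two fields.
run1 = resolve_fill_form_prompt({"name": "text", "email": "email"})

# Run 2: a phone field was added later; the same prompt now yields three actions.
run2 = resolve_fill_form_prompt({"name": "text", "email": "email", "phone": "tel"})

print(len(run1), len(run2))
```

Because the list of actions is derived on every run, the same prompt text keeps working when fields are added or removed.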

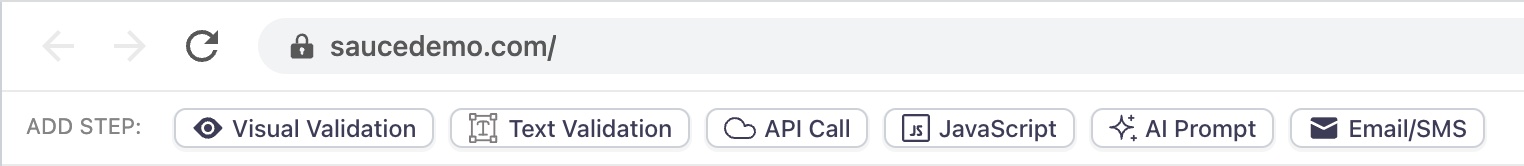

To add a Prompt Step, switch to Recording mode and click the ‘AI Prompt’ button.

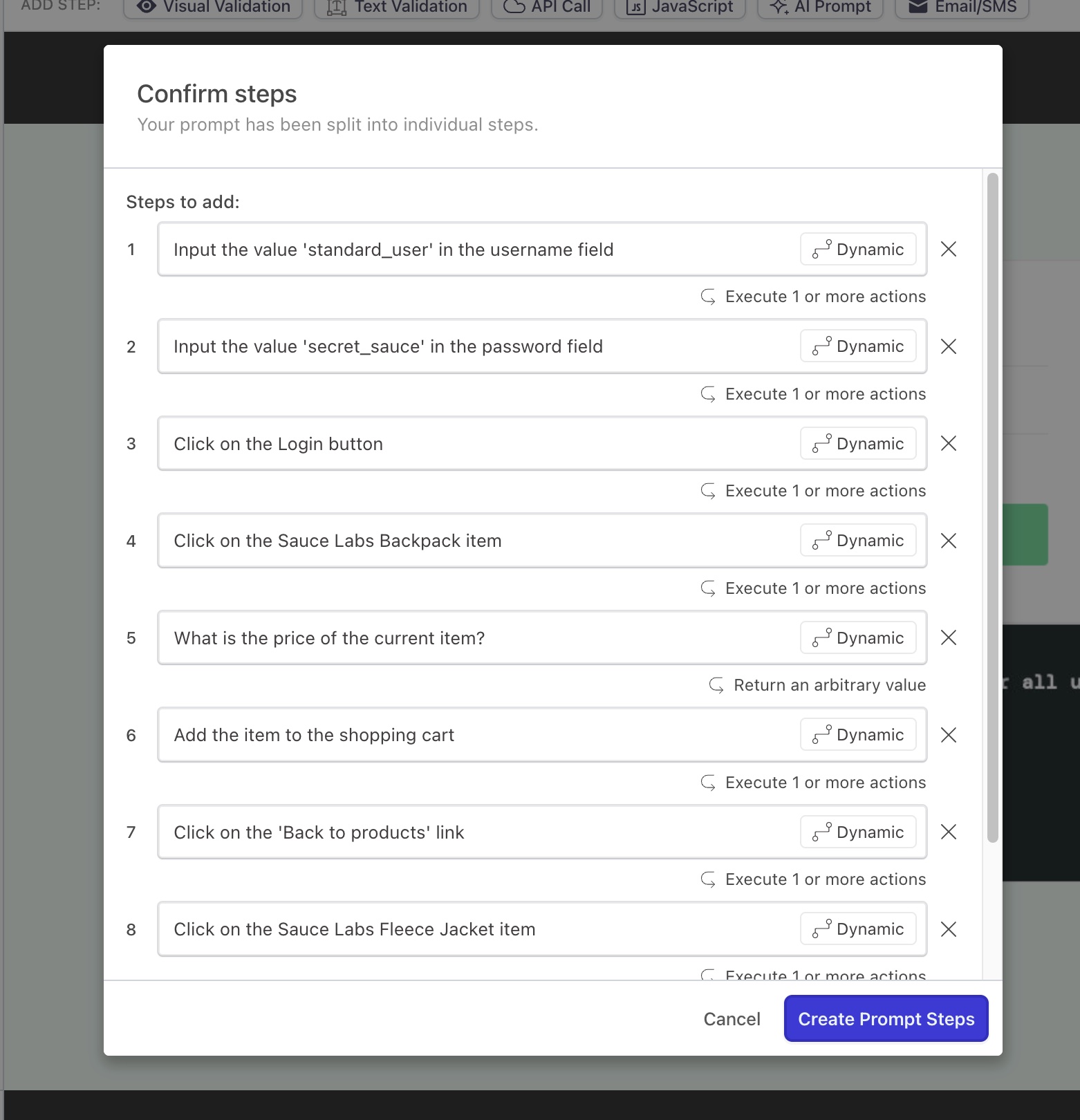

In the modal, type in the actions that you want Reflect to perform. Reflect will analyze the prompt and, if necessary, split it into multiple steps that each represent a distinct instruction. For example, the following set of instructions can be entered into a single prompt when testing the Sauce Demo sample e-commerce site located at https://www.saucedemo.com:


This prompt will be split into multiple steps as shown in the screenshot below:

Making assertions using AI Prompts

In addition to executing actions, AI prompts can also make assertions about the application under test. Reflect will automatically detect whether an AI prompt should resolve to an action, a true/false assertion, or a question. You can change what type of result a prompt resolves to by clicking on the Prompt Step in the sidebar, and changing the following dropdown value:

Boolean assertions

Boolean assertions are prompts that evaluate to a true or false response based on the current state of the application under test. Examples of Boolean assertions include:

- Assert that there are three rows in the data table

- Validate that the total is less than 60 dollars

By default, Boolean assertions will fail if the result is false. You can reverse the expectation and assert that the result is false by clicking on the test step and switching the ‘Expected Result’ value from true to false.
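The pass/fail rule for a Boolean assertion can be summarized in a few lines. This is an illustrative sketch only; `boolean_step_passes` is a hypothetical helper, not part of Reflect.

```python
def boolean_step_passes(result: bool, expected: bool = True) -> bool:
    """A Boolean assertion step passes when the result matches the Expected Result."""
    return result == expected


# Default behavior: Expected Result is true, so a false result fails the step.
default_outcome = boolean_step_passes(False)

# With Expected Result switched to false, a false result passes the step.
reversed_outcome = boolean_step_passes(False, expected=False)

print(default_outcome, reversed_outcome)
```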

Arbitrary questions

The Reflect AI is also capable of answering arbitrary questions about the state of the application. Examples include:

- How many rows are in the data table?

- What is the error message associated with the email input field?

- What is the amount associated with the current Opportunity?

By default, Reflect makes no assertions against the answer to a question. However, using Reflect’s Assertions feature, you can configure one or more assertions on the resulting answer, such as expecting an exact result on every run, or expecting the result to fall within a range of numerical values.
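To make the two kinds of assertion concrete, here is a rough sketch of an exact-match check and a numerical range check applied to an answer string. It is an assumption-laden illustration rather than how Reflect evaluates assertions: `first_number`, `assert_exact`, and `assert_in_range` are hypothetical helpers, and the number parsing is deliberately simple.

```python
import re
from typing import Optional


def first_number(answer: str) -> Optional[float]:
    """Extract the first number from an answer, ignoring thousands separators."""
    match = re.search(r"-?\d+(?:\.\d+)?", answer.replace(",", ""))
    return float(match.group()) if match else None


def assert_exact(answer: str, expected: str) -> bool:
    """Pass only when the answer equals the expected text (surrounding whitespace ignored)."""
    return answer.strip() == expected.strip()


def assert_in_range(answer: str, low: float, high: float) -> bool:
    """Pass when the answer contains a number between low and high, inclusive."""
    value = first_number(answer)
    return value is not None and low <= value <= high


# "How many rows are in the data table?" answered with "3"
rows_ok = assert_exact("3", "3")

# "What is the amount associated with the current Opportunity?" answered with "$1,250.00"
amount_ok = assert_in_range("$1,250.00", 1000, 1500)

print(rows_ok, amount_ok)
```

A range check is usually the better fit when the answer is expected to vary between runs, while an exact match suits values that should never change.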

The following actions cannot currently be executed via AI:

- File uploads

- File downloads

- Drag-and-drop / swipes

- Text highlighting

- Interactions with native alerts/prompts

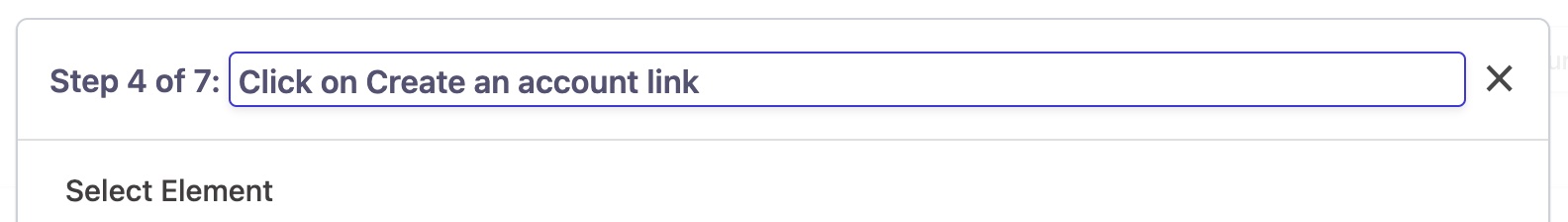

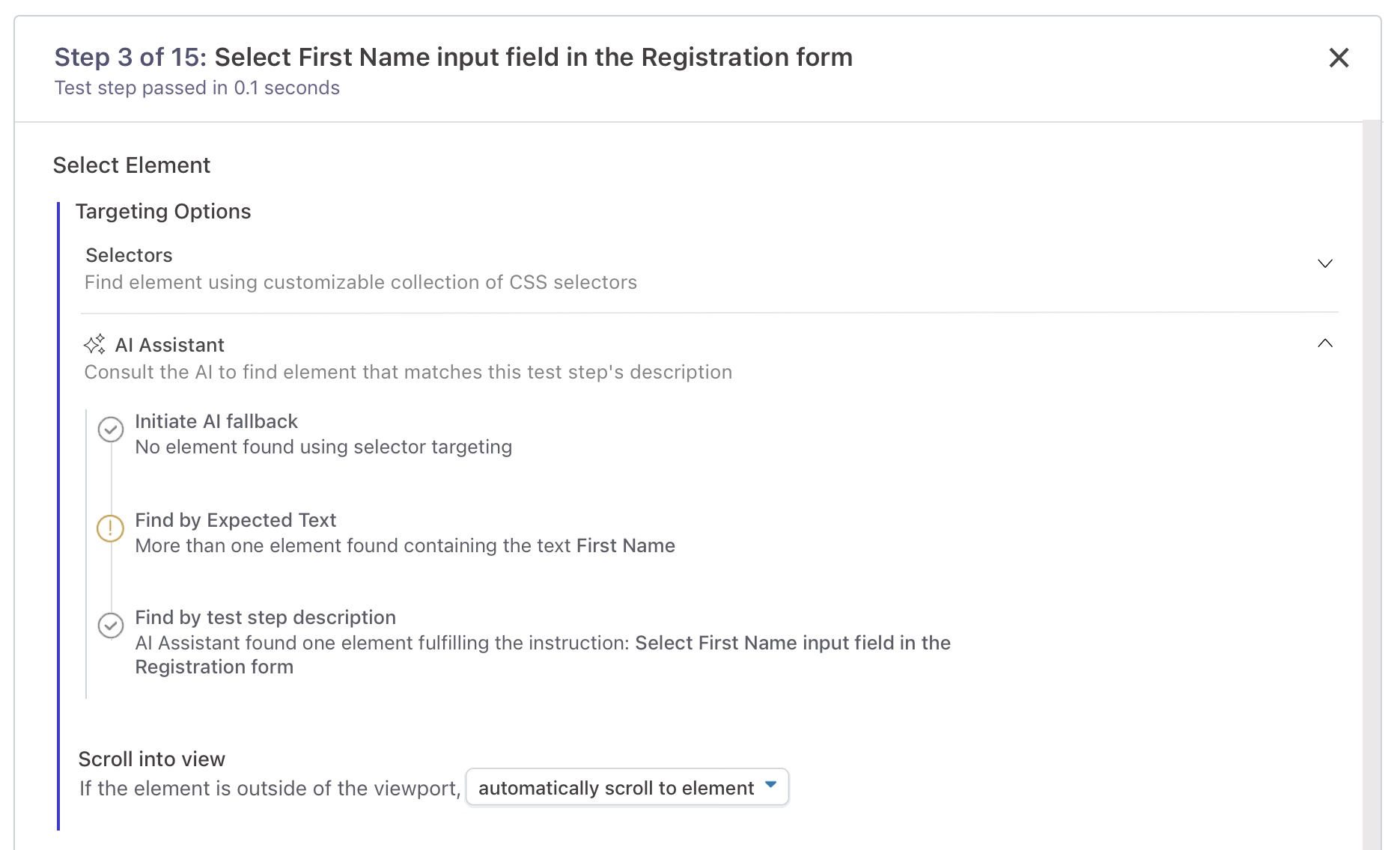

Preventing flaky tests with the AI Assistant

Test steps created through Reflect’s record-and-playback features also utilize AI to ensure they are resilient to changes in the application over time. When a record-and-playback step is recorded, Reflect will capture a set of selectors that will be used at runtime to find an element that fulfills the action. If no element is found using the selectors that were originally recorded, Reflect will use the AI Assistant to find the element instead.

The AI Assistant uses the Expected Text of the element and the test step’s description to find the desired element. This ensures that Reflect tests are as resilient as possible to significant changes in the underlying application, such as a page redesign or a large change in the page structure of the application.
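The lookup order described above (recorded selectors first, AI Assistant as a fallback) can be sketched as follows. This is a simplified model under stated assumptions, not Reflect's implementation: `Element` and `find_element` are hypothetical, the page is modeled as a plain list, and a case-insensitive text comparison stands in for the AI Assistant, which in practice also uses the test step's description.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Element:
    selector: str  # selector that currently identifies the element
    text: str      # visible text of the element


def find_element(
    page: list[Element], recorded_selectors: list[str], expected_text: str
) -> Optional[Element]:
    """Try the recorded selectors in order, then fall back to matching on text."""
    # Step 1: use the selectors captured when the step was recorded.
    for selector in recorded_selectors:
        for element in page:
            if element.selector == selector:
                return element

    # Step 2: no selector matched, so fall back to the element's Expected Text.
    # (A crude stand-in for the AI Assistant.)
    wanted = expected_text.strip().lower()
    for element in page:
        if element.text.strip().lower() == wanted:
            return element
    return None


# After a redesign, the recorded selector "#login-btn" no longer exists on the page.
redesigned_page = [
    Element("a.nav-home", "Home"),
    Element("button.btn-primary", "Log in"),
]

found = find_element(redesigned_page, ["#login-btn"], "Log in")
print(found.selector if found else "not found")
```

In this model the step still locates the login button after the redesign, because the fallback relies on what the element says rather than where it sits in the page structure.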

Descriptions are auto-generated for every test step recorded in Reflect. In some cases, Reflect will not be able to generate a description using the text associated with the test step. When this occurs, Reflect will consult the AI to generate a description of the action that was performed. A brief animation is displayed in the sidebar to indicate that the AI has updated the test step description.

Every test step description is editable. This means that by improving the test step descriptions used in your tests, you not only make your tests more self-documenting, you also make them more resilient to changes!